Cross-Stream First-Touch Attribution with Google Analytics 4 and BigQuery

The purpose of this post is to share how you can use BigQuery to create a first-touch attribution model for a Google Analytics 4 property that crosses multiple streams. I've delayed writing this post because it solves a very specific problem that not everyone will have, but I am publishing it now because I expect this will be very useful for those who need it.

The Problem

Imagine that you have designed a web application, and you have built a website to promote your app. Users visit the website first to learn about your app, and then exit the site to launch the app. In most cases you will create a separate stream for the application and the website.

Now that your app and website are built, you decide to advertise on Facebook. Here is the scenario that a user will experience when they interact with your Facebook ads and the problems that you will encounter:

Sample stream flow

STEP 1) The user clicks the Facebook ad from their mobile device and lands on the website.

| Stream | WEB |

|---|---|

| Client ID | 123 |

| User ID | (not set) |

| User Acquisition Source |

STEP 2) During the same session, the user clicks the link to enter the application and create a free trial account.

| Stream | APP |

|---|---|

| Client ID | 123 |

| User ID | ABC |

| User Acquisition Source | Your Website |

| PROBLEM 1 |

|---|

| Hopefully Google will resolve this in a later release, but right now Google Analytics 4 streams will calculate the User Acquisition Source for each stream, even when the Client ID does not change! |

STEP 3) After the free trial ends a week later, the user decides to purchase a subscription to your application. However, like 80% of users on the Internet (I made that stat up, but I stand by it), the user chooses to sign in on a desktop device to complete the transaction rather than the mobile device previously used.

| Stream | APP |

|---|---|

| Client ID | 456 |

| User ID | ABC |

| User Acquisition Source | (not set) |

| PROBLEM 2 |

|---|

| Google Analytics 4 will attribute the transaction to the User Acquisition Source "Direct" or "(not set)", when the user was actually originally acquired by the Facebook Ad. |

In a perfect world, the User Acquisition report would display one user with a conversion, who was acquired through Facebook. Instead, you’ll see multiple users with incorrect conversion attribution.

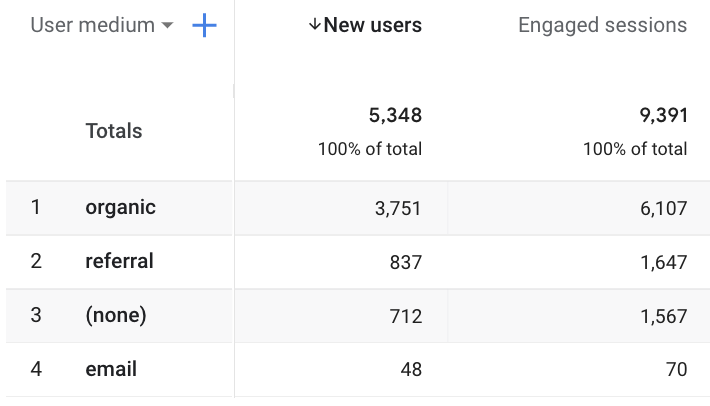

User attribution

BUT… all the data to resolve this problem exists in BigQuery (if you haven't set up the BigQuery integration, take a look at THIS article).

The Objective

So, the goal here is to use the Client ID (set by a cookie to identify the device) and the User ID (set only when the user is authenticated) to identify the first User Acquisition Source generated by this user, and attribute the transaction to that source.

| IDENTIFIERS |

|---|

| For a refresher on the difference between Client ID, Pseudo ID and User ID, check out THIS article. |

We will do this in a BigQuery table, so that you can read the data into an accurate User Acquisition report created in your favorite BI tool (Data Studio, Looker, Tableau, etc).

The Solution

The solution to this problem has 3 steps, and you can find the code samples for each below.

Table #1: First Touch by Pseudo ID

Create a table that displays the original acquisition channel and first visit timestamp for each user_pseudo_id (for a web stream this is the "Client ID"). Example:

| user_pseudo_id | medium | campaign | source | first_visit_date |

|---|---|---|---|---|

| 123 | Social | FB Ads | 2021-01-01 12:00:00.000000 UTC | |

| 456 | Referral | (not set) | Your Website | 2021-01-01 12:30:00.000000 UTC |

Table #2: User ID to Pseudo ID Map

Create a second table that displays each user_id (set only while the user is authenticated) and a list of user_pseudo_id's that have been associated with it. If a user has authenticated on two devices like the example above, they will have one user_id and two user_pseudo_id's. Example:

| user_id | user_pseudo_id |

|---|---|

| ABC | 123 |

| ABC | 456 |

Table #3: First Touch by User ID

Finally, you use the two tables above to create the final table. This table pulls the user_id and user_pseudo_id from table #2, and then uses the user_pseudo_id to join it with the columns in table #1. It then orders the sessions by the first_session_date and drops all except for the first.

The result is a table where each user_id appears in 1 row, and the columns describe the acquisition source for that user even if they did not authenticate and create a user ID until a later session (maybe even on a different device). The table looks like this:

| user_id | first_pseudo_id | first_visit_date | first_medium | first_source | first_campaign |

|---|---|---|---|---|---|

| ABC | 123 | 2021-01-01 12:00:00.000000 UTC | Social | FB Ads |

The Code

Here is how the sausage is made.

Table #1: First Touch by Pseudo ID

Add your project ID and dataset to the query below and run it. If you have a lot of data you'll want to limit this by date range (maybe last 3 or 6 months). Have the results written to another table in the same dataset called "first_touch_psid".

SELECT * FROM(

SELECT

user_pseudo_id,

traffic_source.medium AS medium,

traffic_source.name AS campaign,

traffic_source.source AS source,

TIMESTAMP_MICROS(event_timestamp) AS first_visit_date,

ROW_NUMBER() OVER(PARTITION BY user_pseudo_id ORDER BY event_timestamp) AS row_n

FROM `<project id>.<dataset>.events_*`

WHERE

event_name = "session_start"

) WHERE row_n = 1After the initial table is built, you'll need to run a scheduled query every day to update it with the prior days data. To create the scheduled query, you'll modify the query above to add two items to the WHERE statement:

WHERE

event_name = "session_start"

AND _TABLE_SUFFIX = FORMAT_DATE('%Y%m%d',DATE_ADD(CURRENT_DATE(), INTERVAL -1 DAY)) # Set date dynamically to prior date

AND user_pseudo_id NOT IN (SELECT user_pseudo_id from `<project id>.<dataset>.first_touch_psid` GROUP BY user_pseudo_id)Table #2: User ID to Pseudo ID Map

Next, create your table to store an ID map. You can also run this for the prior 6 months or so at first and then schedule a daily query to update it.

SELECT

user_id,

user_pseudo_id

FROM `<project id>.<dataset>.events_*`

WHERE user_id IS NOT NULL

GROUP BY 1,2

ORDER BY user_idTable #3: First Touch by User ID

The final query should also be scheduled to run daily and completely overwrite the prior table every time it runs.

SELECT * FROM (

SELECT *,

ROW_NUMBER() OVER(PARTITION BY user_id ORDER BY first_visit_date) AS session_num

FROM(

SELECT

user_id,

user_pseudo_id AS first_pseudo_id

FROM

`<project id>.<dataset>.uid_psid_map`

) AS uid_psid

JOIN (

SELECT

user_pseudo_id AS first_pseudo_id_2,

first_visit_date,

medium AS first_medium,

source AS first_source,

campaign AS first_campaign

FROM

`<project id>.<dataset>.first_touch_psid_map`

) AS psid

ON uid_psid.first_pseudo_id = psid.first_pseudo_id_2

)

WHERE session_num = 1And that's it. Once your queries are scheduled you can connect your BI tool to table #3 and start making smarter decisions on where to spend your advertising budget.

Other Resources for BigQuery and GA4

Here are a few other posts that I’ve created for using BigQuery with data from Google Analytics 4:

- Cross-Stream First-Touch Attribution with Google Analytics 4 and BigQuery

- How the WITH Clause Can Simplify Your SQL Queries

- BigQuery + GA4: How To Get the First or Most Recent Event for a User

- How to Use the Over clause to Create a Moving Average in BigQuery

- Data Studio + BigQuery + GA4: Viewing a Parameter Across Multiple Events

- Data Studio + BigQuery + GA4: How to View Multiple Parameters on the Same Event

- How to Flatten a BigQuery Table with UNNEST